Using AWS to Create a Simple and Effective Disaster Recovery Strategy

Amazon Web Services (AWS) is an industry-leading cloud computing platform that offers a wide range of cloud services. One of its oldest and most popular services is S3, which stands for Simple Storage Service.

Amazon S3 is a cloud object store built for scalability, availability, and durability. S3 is designed for 99.999999999% (eleven nines) durability, which means that an object stored in S3 has a 99.999999999% probability of remaining intact over a given year. This level of durability is very desirable when storing important files that you could need at a moment’s notice. Availability is a related but separate property: it describes how reliably you can reach your data when you ask for it. S3 supports both by storing your data redundantly across at least three Availability Zones (the One Zone storage classes are the exception). Availability Zones are distinct locations within a region that are engineered to be isolated from failures in other Availability Zones, so the service is designed to keep your data safe even if an entire AZ is lost.
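To put the durability figure in perspective, the arithmetic can be sketched as below. This is a simplified illustration that treats the design target as an independent per-object annual probability; the function names and the object count are assumptions chosen for the example, not part of any AWS API.

```python
from fractions import Fraction

# Design target quoted for S3: 99.999999999% ("eleven nines") annual durability.
DURABILITY = Fraction(99_999_999_999, 100_000_000_000)


def expected_annual_losses(object_count: int) -> Fraction:
    """Average number of objects expected to be lost in one year."""
    return object_count * (1 - DURABILITY)


def years_per_lost_object(object_count: int) -> Fraction:
    """Average number of years between single-object losses."""
    return 1 / expected_annual_losses(object_count)


# Hypothetical workload: ten million stored objects.
objects = 10_000_000
print(float(expected_annual_losses(objects)))  # 0.0001 objects per year
print(int(years_per_lost_object(objects)))     # one object every 10000 years
```

Under this simple model, a store of ten million objects would expect to lose a single object about once every 10,000 years.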

Another feature of S3 is the ability to encrypt your files in several ways. You can encrypt files on the client side before they are uploaded, or have S3 encrypt them on the server side as they are written to storage. With server-side encryption, you can let S3 manage the encryption keys (SSE-S3), let AWS Key Management Service manage them (SSE-KMS), or supply and manage your own customer-provided keys (SSE-C).
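As a rough sketch, the three server-side options map onto flags of the AWS CLI `aws s3 cp` and `aws s3 sync` commands. The helper below only assembles those flags; the function name `sse_flags`, the mode labels, and the key file path are illustrative assumptions.

```python
from typing import List, Optional


def sse_flags(mode: str,
              kms_key_id: Optional[str] = None,
              key_file: Optional[str] = None) -> List[str]:
    """Return AWS CLI flags for one server-side encryption option.

    mode is a label made up for this sketch:
      "s3"       -> S3-managed keys (SSE-S3)
      "kms"      -> keys managed by AWS KMS (SSE-KMS)
      "customer" -> customer-provided key (SSE-C)
    """
    if mode == "s3":
        return ["--sse", "AES256"]
    if mode == "kms":
        flags = ["--sse", "aws:kms"]
        if kms_key_id:
            # Without a key ID, the AWS-managed KMS key for S3 is used.
            flags += ["--sse-kms-key-id", kms_key_id]
        return flags
    if mode == "customer":
        if not key_file:
            raise ValueError("SSE-C requires a key file that you manage")
        # fileb:// tells the CLI to read the key as raw bytes.
        return ["--sse-c", "AES256", "--sse-c-key", f"fileb://{key_file}"]
    raise ValueError(f"unknown encryption mode: {mode}")
```

For example, `sse_flags("s3")` yields the flags to append to an upload command when you want S3 to manage the keys. With SSE-C, the same key must be supplied again on every download, so losing the key means losing access to the backup.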

Not only is S3 extremely durable and highly available, it is also one of the more cost-effective cloud storage options. Your first 50 TB of S3 Standard storage costs $0.023 per GB per month (rates vary by region and change over time), which is a small fee to pay for a copy of your files in the cloud. Because S3 is durable, highly available, and cost-effective, it is a great place to store redundant copies of large backups.
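A quick estimate shows what that rate means in practice. The sketch below applies only the first-tier storage rate quoted above and ignores request and data transfer charges; the function name and the 500 GB backup size are assumptions for illustration.

```python
from decimal import Decimal

# First-50-TB storage rate quoted in this article, in USD per GB per month.
PRICE_PER_GB_MONTH = Decimal("0.023")


def monthly_storage_cost(size_gb: float) -> Decimal:
    """Estimated monthly storage cost in USD, rounded to cents.

    Only valid for totals under the 50 TB first pricing tier.
    """
    cost = Decimal(str(size_gb)) * PRICE_PER_GB_MONTH
    return cost.quantize(Decimal("0.01"))


# Hypothetical backup set of 500 GB.
print(monthly_storage_cost(500))  # 11.50
```

At this rate, keeping 500 GB of backups in S3 comes to roughly $11.50 per month in storage charges.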

Many organizations already configure their databases and servers to create backups automatically on a schedule. This is a great practice that protects you from losing important information if your servers go down. The pitfall is that many organizations store these backups on the same internal servers. What happens if your internal network is compromised? You may lose the backups you took too!

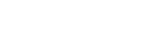

One way to get around this potential pitfall is to leverage the AWS Command Line Interface (CLI) and S3 to automatically push your backups to the cloud. This can be scheduled to run daily, weekly, or monthly depending on the requirements of your organization.
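A minimal sketch of such a push might look like the following. It assumes the AWS CLI is installed and already configured with credentials; the bucket name, local directory, and prefix are hypothetical placeholders, and the helper names are made up for this example.

```python
import subprocess
from typing import List


def build_backup_command(local_dir: str, bucket: str, prefix: str) -> List[str]:
    """Assemble an `aws s3 sync` command that copies new or changed files."""
    destination = f"s3://{bucket}/{prefix.strip('/')}/"
    return ["aws", "s3", "sync", local_dir, destination]


def push_backups(local_dir: str, bucket: str, prefix: str) -> None:
    """Run the sync; raises CalledProcessError if the CLI reports a failure."""
    subprocess.run(build_backup_command(local_dir, bucket, prefix), check=True)


# Example call (hypothetical paths and bucket name):
# push_backups("/var/backups/db", "example-backup-bucket", "nightly")
```

To run this daily, weekly, or monthly, the script could be invoked from whatever scheduler you already use, such as cron on Linux or Task Scheduler on Windows. `sync` is used here rather than `cp` so that only new or changed backup files are transferred on each run.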

Once you have your backups automatically being pushed to the cloud, you can take advantage of S3’s Lifecycle policies to make sure you are only storing the most recent backups that are relevant for your organization. Lifecycle policies can be set up to delete backups after a certain time period, or to move your backups to S3 Glacier, which is an even more cost-effective storage service, to be archived in case of an emergency. This enables S3 to manage its own data and removes the need for someone to manually maintain the backups stored in the cloud.
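As an illustration, a lifecycle configuration that archives and then deletes backups could be generated like this. The 30-day and 365-day thresholds, the rule ID, and the `nightly/` prefix are assumptions for the example; the resulting JSON follows the structure accepted by `aws s3api put-bucket-lifecycle-configuration`.

```python
import json
from typing import Any, Dict


def backup_lifecycle(archive_after_days: int,
                     delete_after_days: int,
                     prefix: str = "nightly/") -> Dict[str, Any]:
    """Build a lifecycle configuration for objects under one prefix."""
    if delete_after_days <= archive_after_days:
        raise ValueError("backups must be archived before they are deleted")
    return {
        "Rules": [
            {
                "ID": "archive-then-expire-backups",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": prefix},
                # Move backups to S3 Glacier once they are no longer recent.
                "Transitions": [
                    {"Days": archive_after_days, "StorageClass": "GLACIER"}
                ],
                # Delete backups entirely after the retention period.
                "Expiration": {"Days": delete_after_days},
            }
        ]
    }


# Write the policy to a file that can be passed to the AWS CLI.
with open("lifecycle.json", "w") as handle:
    json.dump(backup_lifecycle(30, 365), handle, indent=2)
```

The generated file could then be applied with `aws s3api put-bucket-lifecycle-configuration --bucket <your-bucket> --lifecycle-configuration file://lifecycle.json`, with the retention periods adjusted to your organization's requirements.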

The benefit of automatically uploading your backups to the cloud is that it prepares your organization for any scenario that could cause you to lose access to your servers or databases. It is a simple, cheap, and proactive way to prepare for the worst-case scenarios that could happen. In the event of a hardware failure, natural disaster, or any other incident that could wipe your servers, you will be ready to get another instance of your environment up and running quickly. If your organization is ever faced with one of these scenarios, this will save you a great deal of time and money that would otherwise be spent trying to recover your server or database files. Maintaining a copy of your backups in the cloud keeps them readily available in an emergency and leaves you better prepared for any scenario.

Check out our webinar to learn more about AWS use cases here!